The Architect's Mindset: What Distributed Cognition Actually Looks Like

Three years of AI usage data revealed more than patterns—it revealed a fundamental shift in how I think, work, and architect my own cognitive infrastructure.

I. OPENING: BEYOND THE NUMBERS

In Part 1, I showed you the data: 42,000 messages across 3 platforms, patterns of evolution from user to builder to architect, an accidental optimization for API costs, and the interdisciplinary nature of my work.

But numbers tell only part of the story.

What do these patterns reveal about how I actually think? What does it mean to become an ‘architect’ of your own cognition? And what happens when thinking stops being individual and becomes genuinely distributed across human + AI infrastructure?

The data answered these questions—not through what I intended, but through what emerged.

This is the deeper dive into the transformation the archaeology revealed.

II. THE EVOLUTION

In Part 1, I outlined three phases: The User (2022-2023), The Creator (2024), and The Architect (2025-2026).

Quick reminder:

The User: 8 → 543 messages. Learning what’s possible, discovering how to communicate with AI.

The Creator: Created 23 Custom GPTs (13 public, 10 private). 46.8% of what I used, I built myself. Started thinking meta-cognitively—not just “what’s the answer?” but “what cognitive capability would help me think through this type of problem?”

The Architect: Migrated to TypingMind. 68 agents; 94.3% of TypingMind conversations use them. Stopped building individual tools, started thinking about how cognitive capabilities compose. 27.5% of conversations use multiple agents working together.

But what do these phases reveal about how cognition itself changed?

The shift wasn’t just using more AI, or using it differently. Each phase represented a fundamental change in cognitive relationship.

“The User” was about learning the language. “The Creator” was about authoring extensions of myself based on years of experience. “The Architect” was about designing systems where those extensions work together.

Connecting to the builder phase:

In August 2025, I wrote about developing a suite of AI assistants that work together through prompt chaining—SparkPlug for strategy, Compass Guardian for ethics, Spark for design, The Architect for product, DaVinci for UX, Trinity for project management.

That article captured the builder phase mid-flight. This analysis revealed what came after: those orchestration patterns became my default way of working. Not just for special projects—for 94.3% of everything I do in TypingMind.
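For readers who haven’t used prompt chaining: each assistant’s output becomes the next assistant’s input. The minimal Python sketch below shows only the shape of that data flow. It assumes a toy `make_agent` helper (hypothetical, standing in for a real model call made with the agent’s system prompt) and an illustrative ordering of four of the assistants named above. It is not how TypingMind implements agents.

```python
from typing import Callable

# An "agent" here is just a function from prompt text to output text.
Agent = Callable[[str], str]


def make_agent(name: str, role: str) -> Agent:
    """Hypothetical toy agent: it labels its input with its name and role.

    A real agent would send the prompt to a model with its own system prompt.
    """
    def run(prompt: str) -> str:
        return f"[{name}: {role}] {prompt}"
    return run


def chain(agents: list[Agent], initial_prompt: str) -> str:
    """Prompt chaining: feed each agent's output into the next agent."""
    output = initial_prompt
    for agent in agents:
        output = agent(output)
    return output


# Illustrative ordering only; the names come from the assistants listed above.
pipeline = [
    make_agent("SparkPlug", "strategy"),
    make_agent("Compass Guardian", "ethics"),
    make_agent("The Architect", "product"),
    make_agent("DaVinci", "UX"),
]

result = chain(pipeline, "Idea: voice-first research assistant")
print(result)
```

Each stage sees everything the earlier stages produced, which is why order matters: the strategy framing exists before product and UX work build on it.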

III. HOW I ACTUALLY THINK

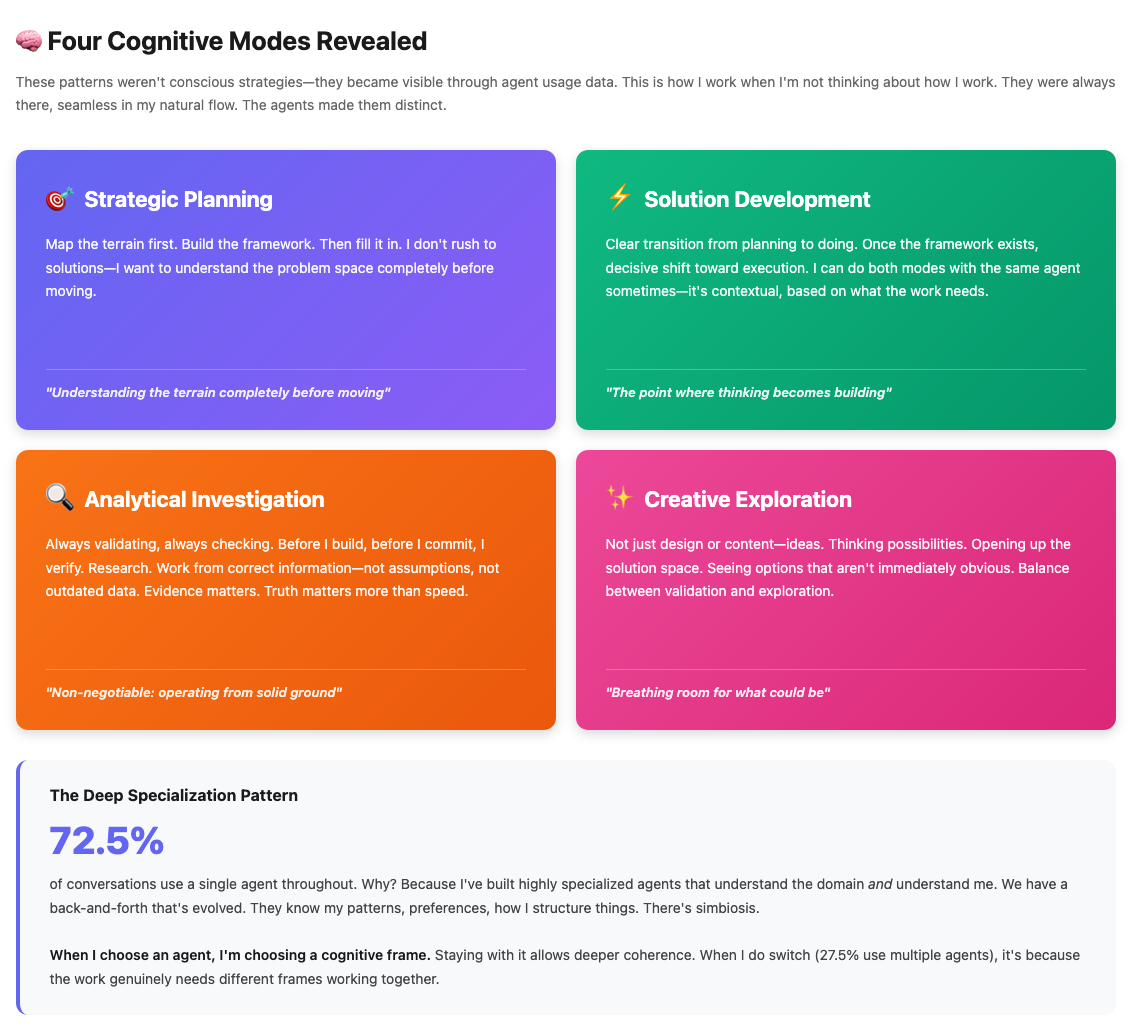

Looking at agent usage patterns revealed four modes in how I approach work.

I already work this way, moving between skills depending on context and what the moment needs. But it all blended together. The agents made these patterns distinct. Visible.

Four Patterns That Became Clear

Strategic Planning

Map the terrain first. Build the framework. Then fill it in.

I don’t rush to solutions—I want to understand the problem space completely before moving.

Solution Development

But I don’t stay in planning mode. There’s a transition point where thinking becomes building, where strategy becomes execution.

The interesting part: I can do both with the same agent sometimes. It’s contextual. I don’t always need to switch—the mode shifts based on what the work needs in that moment.

Analytical Investigation

This is critical for how I work: always validating, always checking.

Before I build, before I commit, I verify. Research. Validate assumptions. Work from correct information—not outdated data, not what seems obvious.

Investigation agents show this clearly. Evidence matters. Truth matters more than speed.

This is non-negotiable: I need to know I’m operating from solid ground.

Creative Exploration

Not just design or content, but ideas. Thinking through possibilities. Exploring what could be.

Opening up the solution space. Seeing options that aren’t immediately obvious. Imagining different approaches before committing to one.

This mode gives breathing room to the analytical mode. Balance between validation and exploration.

The Deep Specialization Pattern

72.5% of my conversations use a single agent.

Why?

Because I’ve built highly specialized agents for specific domains.

Product agents understand product frameworks. Strategy agents know how I think about strategy. Design agents have the mental models for UX work.

But here’s what matters: these agents understand how I work. Not just the domain—me.

We have a back-and-forth that’s evolved. They know my patterns, preferences, how I structure things. They understand what I need and why I need it the way I need it. There’s symbiosis.

That’s why I stay with one agent per conversation. I’ve already chosen the right cognitive frame. The agent knows the domain and knows me. Switching would lose that context.

And when I do switch (27.5% of conversations use multiple agents), it’s because the work genuinely needs different cognitive frames working together: strategy → product → design, each handling its own layer.

What This Actually Means

These patterns—strategic then tactical, analytical yet creative, deep over broad—they were always there.

They come from years of working across the disciplines I’ve learned: product development, business strategy, AI implementation, UX design.

The patterns existed, but they were indistinguishable in the flow of work. Everything blended. I move between modes and disciplines fluidly, contextually, based on what each situation needs.

The agents separated them. Made them visible. Gave each mode a clear form.

Now I can see: “This is strategic mode. This is analytical mode. This is where I switch from planning to building.”

The data revealed patterns I couldn’t see clearly before—not because they were hidden, but because they were seamless in how I naturally work.

The agents act as amplifiers and expanders of what I already know and do. I understand my limits. I know where my gaps are. The agents fill those gaps and expand my capabilities without my offloading critical thinking to them.

IV. THE EXTENDED MIND IN PRACTICE

The Tool Activation Reality

30.5% tool usage. 7,873 tool calls.

Who invokes them?

It depends.

Sometimes I explicitly request a tool. “Use Perplexity for this research.” Directed.

Other times—Sequential Thinking, certain Mermaid diagrams, other tools—they activate automatically when the agent determines they’re needed for the task.

It’s contextual collaboration, not full automation.

The agents have judgment about when tools help. I have override authority when I need specific capabilities.

This balance is important. I’m not hands-off. I’m leading.
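The split described here, agents choosing tools on their own while I keep override authority, can be sketched as a small routing function. This is a hypothetical illustration: the keyword table stands in for the model’s own judgment (real agents decide through the model’s tool-calling, not keyword matching), and the tool names are simply the ones mentioned in this article.

```python
from typing import Optional

# Hypothetical trigger phrases standing in for the model's own judgment
# about when a tool would help. Not how any real agent platform decides.
AUTO_TRIGGERS = {
    "Sequential Thinking": ("step by step", "reason through", "trade-off"),
    "Mermaid": ("diagram", "flowchart", "sequence of"),
    "Perplexity": ("latest", "market size", "sources"),
}


def choose_tool(request: str, explicit_tool: Optional[str] = None) -> Optional[str]:
    """Return the tool to activate for a request, or None if no tool is needed.

    An explicit choice by the human always wins (override authority).
    Otherwise the first tool whose trigger phrase appears in the request
    is activated automatically.
    """
    if explicit_tool is not None:
        return explicit_tool
    text = request.lower()
    for tool, triggers in AUTO_TRIGGERS.items():
        if any(trigger in text for trigger in triggers):
            return tool
    return None


print(choose_tool("Use Perplexity for this research", explicit_tool="Perplexity"))  # Perplexity
print(choose_tool("Draw a flowchart of the onboarding flow"))                       # Mermaid
print(choose_tool("Rewrite this paragraph more concisely"))                         # None
```

The order of the checks carries the point: the explicit request is evaluated first, so automatic activation never overrides a direct instruction.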

Human-Led AI Workforce

At Somefail, we developed a concept in January 2025: Human-Led AI Workforce.

The premise: Instead of replacing people, humans lead AI teams to handle higher-value work. AI executes repetitive tasks with precision. Humans focus on creative, strategic, high-value thinking.

I’ve built this for myself.

I lead a team of 68 specialized agents in TypingMind that expand my capabilities:

They handle research (Perplexity, Exa, Deep Researcher)

They structure reasoning (Sequential Thinking)

They visualize concepts (Mermaid, charts, HTML)

They manage tasks (Jira, Confluence, TODOs)

I handle:

Synthesis (what does this research mean?)

Strategy (where should we go?)

Judgment (is this the right direction?)

Creative direction (what should this feel like?)

The thinking is distributed, but I’m conducting.

Not offloading cognitive capacity. Expanding it.

Critical thinking stays with me. Decision-making stays with me. The agents expand what I can do with that thinking.

Post-Individual Cognition

Here’s what became clear through the data:

My thinking isn’t individual anymore.

When I work on a complex problem, the cognition is genuinely distributed across:

Human intent: What I want to solve, the direction I’m heading

AI execution: Agents reasoning through the problem

Tool activation: Capabilities deployed (sometimes by me, sometimes automatically)

68 agents: Each a specialized cognitive module in TypingMind

3 platforms: Infrastructure layers enabling different thinking modes

This isn’t metaphor. It’s how the work actually happens.

I don’t think with AI (like using a tool).

I don’t think about AI (meta-level analysis).

I think through AI—as cognitive extension.

The distinction matters. When thinking is distributed this way, asking “who solved the problem?” doesn’t have a clean answer. It’s not “me” or “the AI.” It’s the system—human + agents + tools + platforms integrated.

This is post-individual cognition: thinking that exists in the interaction between human and multiple specialized AIs, not in either alone.

The Question of Self

When cognition is genuinely distributed, what is the “I” that thinks?

This isn’t abstract philosophy—it’s a practical question that emerges from working this way.

If thinking happens across human + 68 agents + tools + platforms, and solutions emerge from the interaction between these components, where exactly is the “me” in that process?

I said earlier: “Critical thinking stays with me. Decision-making stays with me.”

But I also said: “Asking ‘who solved the problem?’ doesn’t have a clean answer.”

These statements are in tension. And I don’t have that tension fully resolved.

What I know:

The locus of intention is human. I decide what problems matter. I set direction. I determine what “good” looks like.

The values and judgment are human. When agents propose solutions, I evaluate them against criteria only I hold. What’s ethical? What’s valuable? What aligns with goals only I can define?

The continuity across sessions is human. I’m the through-line connecting yesterday’s work to today’s. The agents reset. I persist.

But the execution? That’s distributed.

When Sequential Thinking structures an argument, when Perplexity gathers research, when agents generate frameworks and solutions—those cognitive acts happen in the AI layer.

The synthesis that emerges when I work with that output? That’s the interaction. Human judgment + AI execution. Not purely mine, not purely the system’s.

What I’m uncertain about:

When a solution emerges from this collaboration—agent reasoning + tool outputs + my synthesis—what percentage is “mine”?

Is the “I” the one who establishes direction and purpose, while execution is distributed?

Or is the “I” more like a conductor—not playing the instruments, but determining what the orchestra plays and how?

Maybe the question itself is wrong. Maybe “me” isn’t a fixed location—it’s the pattern of intention and judgment that flows through the distributed system.

I don’t have a clean answer.

What I know: the work that emerges feels authentically mine. The direction, the values, the judgment—those are human. The system expanded what I can do with those, but didn’t replace them.

The “I” that thinks might not be individual anymore in execution. But it’s still individual in what it chooses to think about and why.

That’s the best I can articulate right now. The data revealed the distribution. The philosophy is still catching up.

What Extended Mind Actually Feels Like

Before this infrastructure, certain tasks would take much longer. Or I’d hit analysis paralysis and not do them.

Now, I have the components I need to advance.

Need market research but don’t have time for deep data gathering? Agents handle that. I focus on strategic implications.

Need to structure messy thinking? Voice-dump the raw thoughts (MacWhisper + TypingMind). Agents help find the structure.

Need validation before committing? Investigation agents bring evidence.

I’m not doing less thinking—I’m thinking at a higher level because execution-layer work is handled by infrastructure.

The agents unblock me. They give me components I was missing—without external dependencies, without waiting, without the friction that used to create paralysis.

The system expanded what’s possible for me to do, alone.

But “alone” doesn’t mean what it used to. My cognition is distributed. The “me” that solves problems is human + 68 agents + tools + platforms working as an integrated system.

That’s what post-individual cognition looks like in practice.

V. INTERDISCIPLINARY BY NATURE

77.8% of my TypingMind conversations span multiple categories.

This isn’t strategy. It’s a natural consequence.

Problems don’t arrive categorized. Market research raises AI questions, which surface UX considerations, which require development thinking. The categories aren’t separate—they’re interconnected layers.

The strongest connection: AI + Design appear together in 82 conversations (43.5% affinity).

I don’t think “build AI” separate from “design UX.” I think: “How does AI enable UX? How does UX shape what AI should do?” Same question, different angles.
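The two kinds of figures quoted in this section, the share of conversations spanning multiple categories and how often two categories appear together, can be computed from category-tagged conversations along these lines. This is a minimal Python sketch on invented sample data; it does not reproduce the 43.5% affinity figure, whose exact definition isn’t given here.

```python
from collections import Counter
from itertools import combinations

# Invented sample data: each conversation is tagged with the categories it touches.
conversations = [
    {"AI", "Design"},
    {"AI", "Design", "Development"},
    {"Strategy"},
    {"AI", "Research"},
    {"Design"},
]


def multi_category_share(convs: list[set[str]]) -> float:
    """Fraction of conversations tagged with more than one category."""
    multi = sum(1 for tags in convs if len(tags) > 1)
    return multi / len(convs)


def pair_counts(convs: list[set[str]]) -> Counter:
    """Count how many conversations contain each unordered pair of categories."""
    counts: Counter = Counter()
    for tags in convs:
        # sorted() gives each pair a stable order, so ("AI", "Design") and
        # ("Design", "AI") are counted as the same pair.
        for pair in combinations(sorted(tags), 2):
            counts[pair] += 1
    return counts


print(f"{multi_category_share(conversations):.1%}")  # 60.0%
print(pair_counts(conversations).most_common(1))      # [(('AI', 'Design'), 2)]
```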

Why this matters for distributed cognition:

Because problems at intersections require cognitive infrastructure that can move fluidly between domains.

An agent specialized only in AI wouldn’t handle the UX questions. A design agent alone wouldn’t understand AI constraints.

The interdisciplinary work requires distributed infrastructure—multiple specialized agents that together cover the terrain I actually work across.

The 77.8% overlap isn’t accidental. It’s a consequence of:

My background spanning these domains (business strategy, AI development, UX design, product)

Work that naturally lives at intersections

Cognitive infrastructure built to handle that reality

I don’t work in domains. I work at intersections. The infrastructure reflects that.

VI. WHAT GETS LOST

Not everything about distributed cognition is gain.

There are trade-offs I’m starting to notice. Things that shift when thinking becomes this integrated with infrastructure.

Cognitive Dependency

What happens if I lose access to these tools?

It’s a real question. With 94.3% of my TypingMind work using agents, with 30.5% involving tool activation (much of it automatic), with thinking genuinely distributed across this infrastructure, there are capabilities I now exercise through the system that I’m not sure I still have without it.

Could I still do deep market research without Perplexity auto-gathering data? Probably. But slower, and I’d feel the friction.

Could I structure complex reasoning without Sequential Thinking scaffolding? Yes. But I’ve gotten used to that support.

The dependency is real. And I’m not sure if that’s a problem or just the natural evolution of how humans have always worked—we depend on our tools. Calculators, search engines, even writing itself. This is just the next layer.

But it’s worth acknowledging: I’ve built infrastructure I now depend on.

Memory Fragmentation

Right now, my memory is split:

ChatGPT: Years of context about how I work, patterns from 2022-2026

TypingMind: Agent-specific memory, project context, recent work

Claude Desktop: Emerging memory, technical workflows

This fragmentation creates friction. An agent in TypingMind doesn’t know what happened in ChatGPT. ChatGPT doesn’t have context from my TypingMind work.

The continuity of “me” across platforms is broken.

Not catastrophically. But noticeably. I’m the only through-line connecting these memory systems. The platforms don’t talk to each other.

This is something I’m experimenting with—how to normalize memory across infrastructure. But for now, the distributed system has fragmented memory, and that has costs.

The Overhead of Maintaining Infrastructure

68 agents. 3 platforms. Multiple models. Subscriptions to manage. Memory systems to maintain.

This has cognitive overhead.

Knowing which agent to use when. Remembering which platform has which capabilities. Managing context across fragmented memory.

Is it worth it? For me, yes—the cognitive range justifies the complexity.

But it’s honest to recognize: there’s a cost to distribution. It’s not free cognitive expansion. It requires maintenance, orchestration, deliberate architecture.

Simpler workflows have their advantages. I’ve chosen complexity because it serves my work. But that’s a trade-off, not a pure upgrade.

The Authenticity Question

When output emerges from human + AI collaboration, is it “authentically mine”?

My answer: Yes. Because I direct the intention, provide the judgment, determine what’s valuable.

But I know others ask this question. When agents structure my raw voice dumps, when they generate frameworks I then refine, when solutions emerge from the interaction—where’s the boundary between my thinking and the system’s output?

I don’t lose sleep over this. The work feels mine because the direction, values, and judgment are mine. The agents execute what I guide them toward.

But it’s a valid question in an era where AI-generated content floods everything. Authenticity matters.

My stance: authenticity is in the intention and judgment, not in whether every word was manually typed. But I understand why the question exists.

Not all of these are problems I need to solve. Some are just realities of working this way—trade-offs I’ve accepted because the gains outweigh the costs.

But Part 2 would be incomplete without acknowledging: distribution has shadows, not just light.

The transformation is real. But so are the complexities that come with it.

VII. WHAT THIS REVEALS

I’ve Become a Human-AI Cognitive System

This isn’t metaphor or philosophical musing.

It’s operational reality.

94.3% of my TypingMind conversations use agents

Agents activate tools automatically (30.5% tool usage, which I don’t always invoke manually)

Work spans 68 specialized agents in TypingMind, plus Custom GPTs in ChatGPT, plus artifacts in Claude Desktop

Thinking happens through this infrastructure, not just with it

When I solve a complex problem now, the solution emerges from the interaction between:

My judgment and intent → Agent reasoning and execution → Tool capabilities deployed → Context and memory across platforms

This is distributed cognition at work.

The system isn’t external to my thinking—it’s integrated with it. The agents aren’t assistants I consult. They’re cognitive extensions I think through.

Optimizing for Cognitive Range, Not Efficiency

Looking across all the patterns—multi-platform, multi-model, multi-agent, multi-disciplinary—a meta-pattern emerges:

I optimize for cognitive range, not efficiency.

Most people consolidate. One platform, one model, one general-purpose assistant. Simpler workflow.

I maintain:

Multi-platform (3 platforms, each enabling different modes)

Multi-model (Claude, Gemini, GPT—contextual selection)

Multi-agent (68 agents in TypingMind, not relying on a single general assistant)

Multi-disciplinary (77.8% work crosses domains)

Why maintain this complexity?

Because cognitive diversity requires infrastructure diversity.

Each platform enables different thinking modes:

ChatGPT: Years of memory, quick context-aware responses, Custom GPTs

TypingMind: Multi-model access, 68 specialized agents, deep research capabilities

Claude Desktop: Artifacts for iterative co-creation, technical workflows

Consolidating would simplify the workflow but reduce cognitive capability.

I’m willing to maintain the overhead because each component unlocks something the others don’t.

Platform Fluidity as Philosophy

I migrated from ChatGPT (100% usage) → TypingMind (61.6%) in February 2025.

But I didn’t abandon ChatGPT. I expanded the portfolio.

Added capabilities without discarding what still worked. ChatGPT stayed relevant for quick tasks, legacy memory, Custom GPTs. TypingMind became primary for deep work. Claude Desktop remained available for artifacts and technical needs.

This mirrors how I think about cognitive tools: composable, best-of-breed, adaptable.

Like modern software architecture—specialized services that integrate, not monolithic solutions.

The platform migration wasn’t replacement. It was portfolio expansion. More capabilities available, contextually deployed based on what each situation needs.

VIII. CLOSING: THE META-PATTERN

Co-Evolution Between Tools and Cognition

Stepping back from the individual patterns—the evolution, the cognitive modes, the distributed thinking, the interdisciplinary work, even the shadows—the unifying principle becomes clear:

Co-evolution between tools and cognition.

Not tools adapting to how I think. Not me adapting to use tools.

Both transforming together, recursively.

Here’s how it actually works:

The interdisciplinary work required specialized agents.

Building those agents changed how I think about problems—more meta-cognitively, more architecturally.

Thinking meta-cognitively revealed the need for better orchestration (one agent → multiple agents working together).

Better orchestration enabled handling more complex, multi-layered problems.

More complex problems required even more specialized infrastructure (68 agents, not 23).

More specialized infrastructure enabled new cognitive modes (voice input, brain dumps, agents structuring raw thoughts).

New cognitive modes opened up different kinds of work (work I couldn’t do before, or would have avoided because of the friction).

Different work revealed new needs, which drove building more infrastructure.

And the cycle compounds.

It’s not linear progression. It’s spiral—each element reinforcing the others, the system growing more capable, my thinking evolving with it.

The system isn’t static infrastructure I use.

It’s living architecture that evolves with my thinking—because it IS part of my thinking now.

The tools shaped me. I shaped the tools. We shaped each other.

That’s the meta-pattern the data revealed.

What the Archaeology Actually Revealed

In Part 1, I showed you numbers. In Part 2, I’ve shown you what those numbers mean.

The transformation isn’t just about using more AI, or using it differently.

It’s about how thinking itself changes when it becomes distributed. When 68 specialized agents become cognitive infrastructure. When platforms enable different modes of cognition. When voice unlocks unfiltered ideation. When problems live at intersections that require fluid movement between domains.

From individual cognition → distributed cognition.

From consuming tools → building extensions → architecting systems.

From thinking alone → thinking through infrastructure.

These 42,000 messages documented a mind learning to operate at distributed scale.

This Probably Isn’t Unique to Me

If you work deeply with these tools over time, this transformation is probably happening to you too.

Maybe you haven’t analyzed the data. Maybe the patterns aren’t visible yet.

But the shift from using AI → thinking through AI—that’s likely underway whether you notice it or not.

The archaeology just made it conscious for me.

Visible. Articulable. Something I can now understand and architect deliberately instead of letting it happen unconsciously.

The Real Insight

I haven’t just adopted AI.

I’ve become a human-AI cognitive system.

And once you see that clearly—once the data shows you the transformation that’s already happened—you can’t unsee it.

You start asking different questions. Not “how do I use AI better?” but “how do I architect my cognitive infrastructure deliberately?”

Not “which tool should I use?” but “what capabilities do I need, and how should they integrate?”

Not “am I using too much AI?” but “is my distributed cognition optimized for the work I actually do?”

But here’s what also became clear:

In an era where new AI tools, products, and capabilities launch constantly, you have to sharpen your criteria.

What’s worth testing? What’s worth integrating? What’s a tool that expands capability vs. a toy for experimentation?

Both are valid. But you need to know the difference.

Understanding what you actually want to do and what value you want to create—that’s what lets you navigate the noise. Not chasing every shiny new model or feature, but building infrastructure aligned with your work.

The 68 agents, the 3 platforms, the contextual model selection—these emerged because I got clearer about what matters: expanding cognitive range for the work I actually do, not collecting tools for their own sake.

The questions change because the relationship changed.

And the data proved it had already happened—long before I analyzed it.

Three years in, the patterns are undeniable.

I don’t use AI.

I think through it.

Tech Stack for This Article

Creating this piece involved a blend of human reflection and technological assistance. Here are the key tools used in the process:

MacWhisper: For capturing initial thoughts (in Spanish and English) via stream-of-consciousness dictation, feeding directly into the AI assistant.

Personal AI Content Assistant, built by me in TypingMind and powered by Claude Sonnet 4.5 (1M): This custom assistant took dictation and supported the writing process end-to-end, including brainstorming, structuring ideas, serving as a thought partner, and refining drafts.

Visual Studio Code + Claude Code: Used as the development environment to process Eric’s script and kick off a structured project focused on consolidating and processing my personal data across multiple platforms. This setup allowed me to experiment, iterate, and begin transforming dispersed information into a coherent, programmable system.